I can't find it documented anywhere, and in fact some resources I found specifically don't work with the `\x` notation. So it seems to be some format unique to JavaScript.
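For comparison, a minimal sketch in Python (an assumption: Python is used only to illustrate; it is not the page's JavaScript). Python string literals also accept `\xNN` escapes, where `NN` is two hex digits naming code point U+00NN, and `ascii()` prints non-ASCII characters back in that form:

```python
# In a Python str literal, '\xNN' denotes the single code point U+00NN.
s = 'pas\xe9'
print(s)           # pasé
print(len(s))      # 4: one code point per character, not per byte

# ascii() escapes non-ASCII code points using the same \x notation.
print(ascii('é'))  # '\xe9'
```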

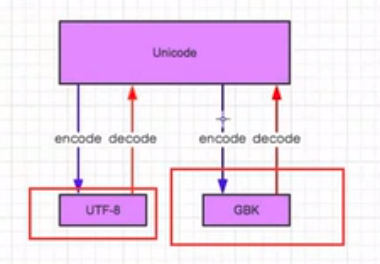

On encodings, to summarize: a Unicode string is a sequence of code points, which are numbers from 0 through 0x10FFFF (1,114,111 decimal). The page you point to uses JavaScript's `unescape` function, which claims to use URL encoding, but URL encoding doesn't use backslash codes; it uses `%XX` percent escapes.
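A small Python sketch of both points, assuming Python's `urllib.parse.quote`/`unquote` as a stand-in for URL encoding in general (they are not the JavaScript `unescape` the page uses). It checks the code point range and shows that URL encoding writes the UTF-8 bytes as `%XX` escapes rather than backslash codes:

```python
from urllib.parse import quote, unquote

# Code points run from 0 through 0x10FFFF inclusive.
print(0x10FFFF)              # 1114111
highest = chr(0x10FFFF)      # valid: the last code point
try:
    chr(0x110000)            # one past the end is rejected
except ValueError as exc:
    print('out of range:', exc)

# URL encoding percent-escapes the UTF-8 bytes of each character.
print('é'.encode('utf-8'))   # b'\xc3\xa9'
print(quote('é'))            # %C3%A9
print(unquote('%C3%A9'))     # é
```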

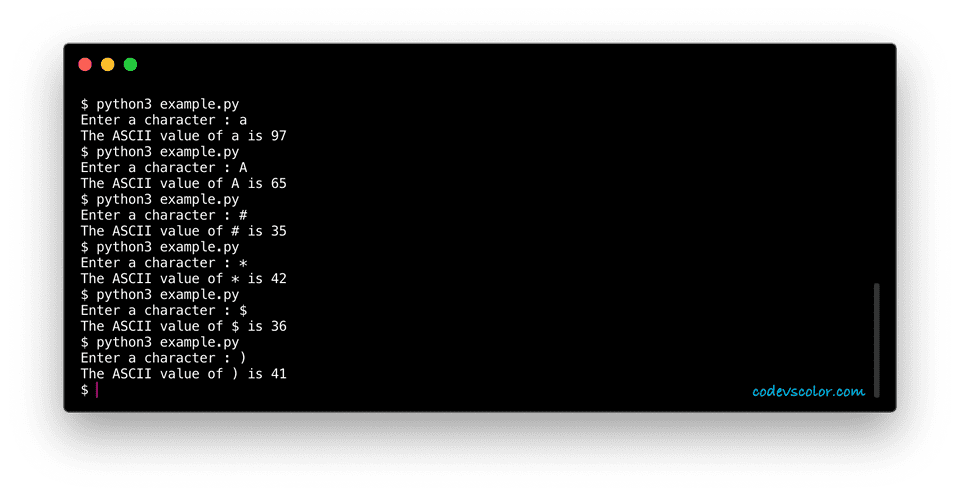

I am using a package in Python that returns a string using ASCII characters as opposed to Unicode (see the example below). Given this is Python 3.8, the string is actually encoded in Unicode; the package just seems to output it as if it were ASCII. As such, when I try to perform `x.decode('utf-8')` or `x.encode('ascii')`, neither works. Is there a way to make Python treat the string as if it were ASCII, so that I can decode it to Unicode? Or is there a package that can serve this purpose? I am relatively new to Python, so I apologise if my explanation is unclear.

Code: `from spanishconjugator import Conjugator as c`, then `verb = c().conjugate('pasar', 'preterite', 'indicative', 'yo')`. This returns the string `'pasÃ©'` where it should return `'pasé'`.

Update: from further searching and from your answers, it appears to be an issue with single 2-byte UTF-8 characters (é) being literally interpreted as two 1-byte Latin-1 characters (Ã©), nothing to do with ASCII (my mistake). Managed to fix it with: `verb.encode('latin-1')`.

Most Python code doesn't need to worry about glyphs; figuring out the correct glyph to display is generally the job of a GUI toolkit or a terminal's font renderer. Note also that an ASCII byte string is, by definition, also a UTF-8 byte string, so it can be decoded using UTF-8 and will produce the same text. Code 1: code to decode the Python 3 string `'geeksforgeeks'`.
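A minimal sketch of the round trip the update describes, assuming the package's output really is UTF-8 bytes that were decoded as Latin-1. `fix_mojibake` is a hypothetical helper name, and the trailing `.decode('utf-8')` is our addition to the `encode('latin-1')` call quoted above: encoding with Latin-1 recovers the original bytes one-for-one, and decoding those bytes as UTF-8 yields the intended characters. The last lines check the ASCII-is-UTF-8 point on `'geeksforgeeks'`:

```python
def fix_mojibake(text: str) -> str:
    """Undo UTF-8 bytes that were mis-decoded as Latin-1 (hypothetical helper).

    Latin-1 maps each code point 0-255 to exactly one byte, so encoding with
    it restores the original byte sequence; decoding that as UTF-8 then
    produces the intended characters.
    """
    return text.encode('latin-1').decode('utf-8')


# Simulate the bug: the UTF-8 bytes of 'pasé' decoded as Latin-1.
garbled = 'pasé'.encode('utf-8').decode('latin-1')
print(garbled)                # pasÃ©
print(fix_mojibake(garbled))  # pasé

# ASCII bytes are valid UTF-8 and decode to the same text either way.
raw = 'geeksforgeeks'.encode('ascii')
print(raw.decode('utf-8') == raw.decode('ascii'))  # True
```

Apply the helper only to text known to be garbled: on already-correct input such as `'pasé'`, the Latin-1 bytes are not valid UTF-8 and `decode('utf-8')` raises `UnicodeDecodeError`.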
